The rise of tools like Clever AI Humanizer underscores a growing arms race in the generative AI ecosystem, where content is not only created by machines but also refined to appear human. This shift is reshaping digital trust, with implications for enterprises, educators, regulators, and content platforms worldwide.

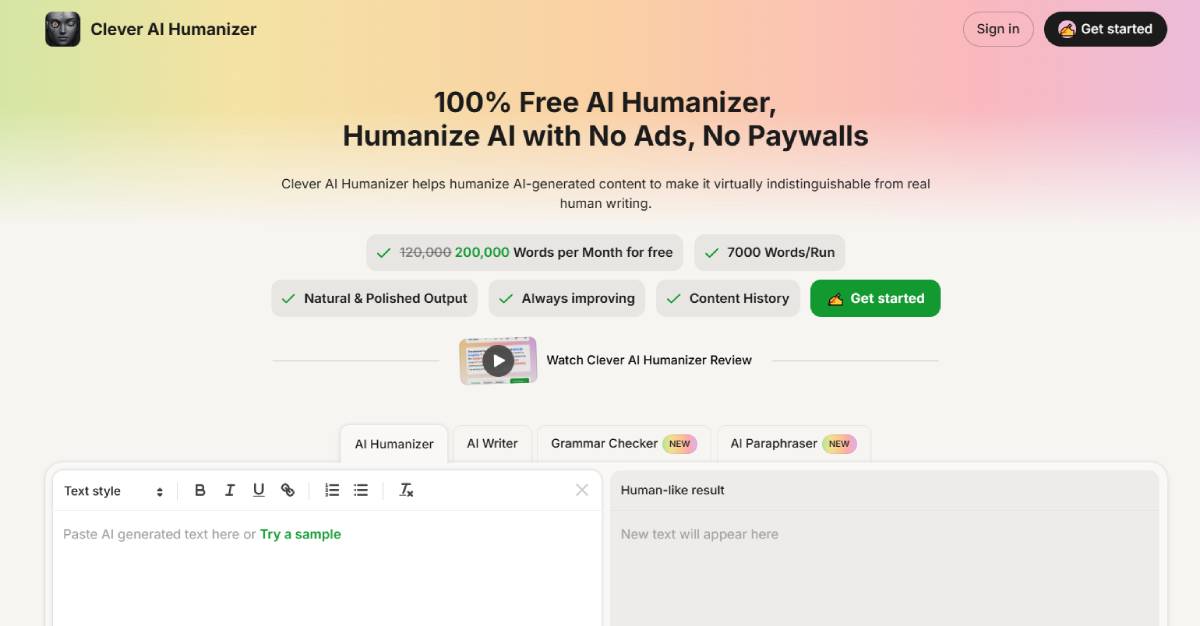

Clever AI Humanizer positions itself as a free tool designed to transform AI-generated text into more natural, human-like language. Its primary value proposition lies in bypassing AI detection systems while improving readability and tone.

The emergence of such tools reflects increasing demand from users seeking to refine outputs from generative AI platforms. Key stakeholders include content creators, marketers, students, and enterprises managing large-scale digital communications.

This trend highlights a growing dual-market dynamic: AI generation tools on one side, and AI detection or humanization tools on the other. The result is an escalating cycle of innovation where each layer attempts to outpace the other in accuracy and effectiveness.

The development aligns with a broader global trend: generative AI adoption has surged across industries, from media and marketing to education and enterprise communications. As AI-generated content becomes more prevalent, concerns around authenticity, originality, and trust have intensified.

In response, a new category of AI detection tools has emerged, aimed at identifying machine-generated text. However, humanization tools are now challenging the reliability of these systems by refining outputs to evade detection.

This dynamic mirrors earlier technological cycles, such as cybersecurity, where offensive and defensive capabilities evolve in tandem. The stakes are particularly high in sectors like education, journalism, and compliance, where distinguishing between human and AI-generated content carries ethical and operational significance.

Industry experts view the rise of humanization tools as both a technological advancement and a governance challenge. Some analysts argue that these tools enhance usability by improving the quality and accessibility of AI-generated content, making it more suitable for professional and consumer use.

Others raise concerns about misuse, particularly in contexts where authenticity is critical, such as academic submissions or regulatory disclosures. Experts warn that widespread use of such tools could undermine trust in digital content ecosystems.

From a policy perspective, the debate is shifting toward transparency rather than detection alone. Thought leaders suggest that watermarking, disclosure standards, and AI content labeling may become necessary to maintain accountability.

The discussion reflects a broader tension between innovation and control in the rapidly evolving AI landscape.

For businesses, AI humanization tools could enhance content quality and scalability, enabling more efficient communication strategies. However, they also introduce risks related to brand authenticity, compliance, and reputational integrity.

Investors may see growth opportunities in both AI generation and detection markets, as demand for content verification solutions increases. Meanwhile, enterprises may need to implement stricter governance frameworks around AI usage.

From a policy standpoint, regulators face the challenge of defining acceptable use cases while preventing misuse. This could lead to new standards for AI-generated content disclosure, particularly in sectors such as education, media, and financial communications.

The interplay between AI generation, detection, and humanization is expected to intensify, driving continuous innovation across the ecosystem. Decision-makers should monitor regulatory developments, enterprise adoption patterns, and advances in detection technologies.

As the line between human and machine-generated content continues to blur, maintaining trust and transparency will become a defining challenge for the next phase of the digital economy.

Source: CleverHumanizer.ai

Date: April 2026