The emergence of platforms like Grubby AI highlights a growing shift in the generative AI landscape, in which users not only create content with AI but also actively modify it to evade detection. This trend is raising new concerns about digital trust, compliance, and content authenticity across industries.

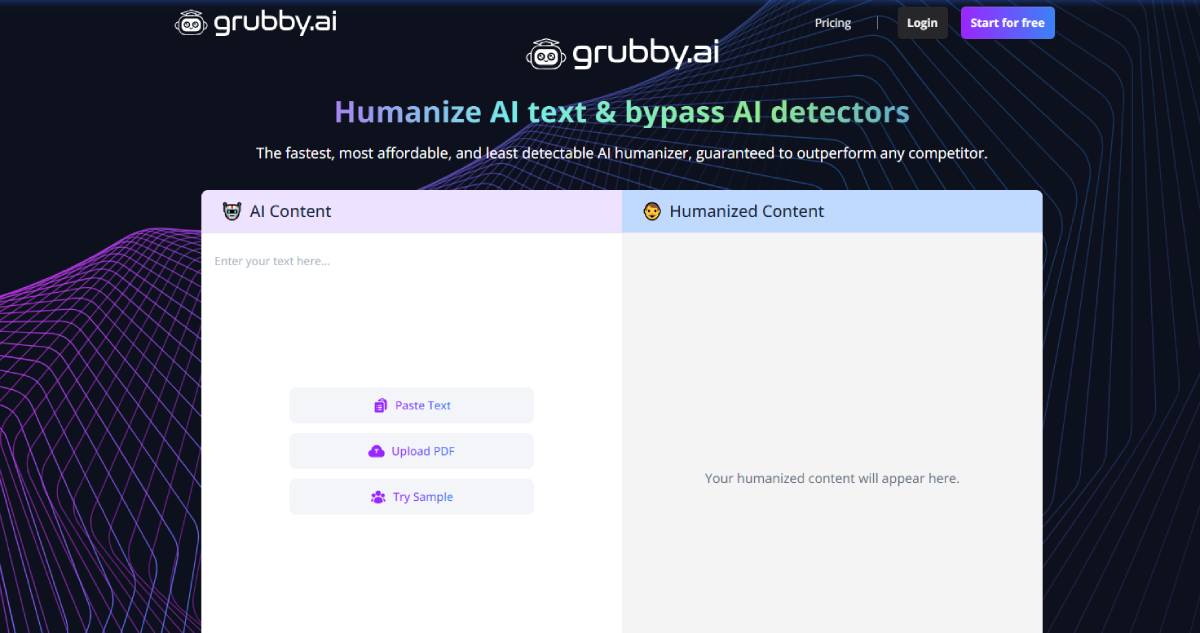

Grubby AI markets itself as an “undetectable AI humanizer,” designed to convert machine-generated text into content that appears indistinguishable from human writing. Its core functionality focuses on improving tone, readability, and variability while reducing the likelihood of detection by AI-checking systems.

The tool reflects increasing demand among content creators, marketers, and students seeking to refine outputs from generative AI platforms. At the same time, it underscores a broader competitive dynamic between AI generation tools and AI detection systems.

Key stakeholders include SaaS providers, enterprises managing digital content, and educational institutions grappling with academic integrity, all of which are directly affected by evolving capabilities in AI text manipulation.

The development fits a broader trend: generative AI adoption has expanded rapidly across global markets, transforming how content is produced at scale. As AI-generated outputs become more sophisticated, distinguishing human-written from machine-generated content has become harder.

In response, AI detection tools have emerged to safeguard authenticity in areas such as education, journalism, and compliance. However, the rise of humanization tools like Grubby AI introduces a new layer of complexity, effectively challenging the reliability of detection mechanisms.

This dynamic resembles an evolving “arms race” among content generation, detection, and obfuscation technologies. It carries significant implications for trust in digital ecosystems, particularly as AI-generated content becomes embedded in critical domains such as financial reporting, legal documentation, and public communication.

Experts are increasingly divided on the implications of undetectable AI tools. Some argue that humanization technologies improve usability by refining raw AI outputs into more natural and engaging content, enhancing productivity for businesses and individuals alike.

Others warn that such tools could undermine transparency and accountability, particularly in regulated or high-stakes environments. Analysts note that widespread adoption may erode confidence in AI detection systems, making it harder to enforce standards in academic and professional settings.

Policy experts suggest that the focus may shift from detection toward disclosure, emphasizing the need for clear labeling of AI-generated content. Industry leaders are also exploring watermarking and traceability solutions as potential safeguards against misuse.

The debate underscores the tension between innovation and governance in the evolving AI content ecosystem.

For businesses, tools like Grubby AI offer opportunities to scale content production while maintaining a human-like tone. However, they also introduce risks related to brand credibility, compliance, and ethical use of AI-generated material.

Investors may see increased activity in both AI content creation and verification markets, as demand grows for solutions that balance efficiency with trust. Enterprises may need to implement stricter internal policies governing AI-assisted content workflows.

From a regulatory perspective, governments face mounting pressure to define standards for AI transparency and accountability. This could lead to new frameworks requiring disclosure of AI-generated content, particularly in sectors where authenticity is critical.

The evolution of AI humanization tools is expected to accelerate, further blurring the line between human-written and machine-generated content. Decision-makers should monitor advancements in detection technologies, regulatory responses, and enterprise adoption patterns. As the ecosystem matures, maintaining trust and transparency will become central to sustaining the value of AI-driven content in global markets.

Source: Grubby.ai

Date: April 2026