AI detection platforms such as Winston AI are gaining prominence amid rising concerns over synthetic content. As generative AI adoption accelerates, businesses, educators, and regulators are turning to detection tools to safeguard authenticity, signaling a new layer of infrastructure in the global AI economy.

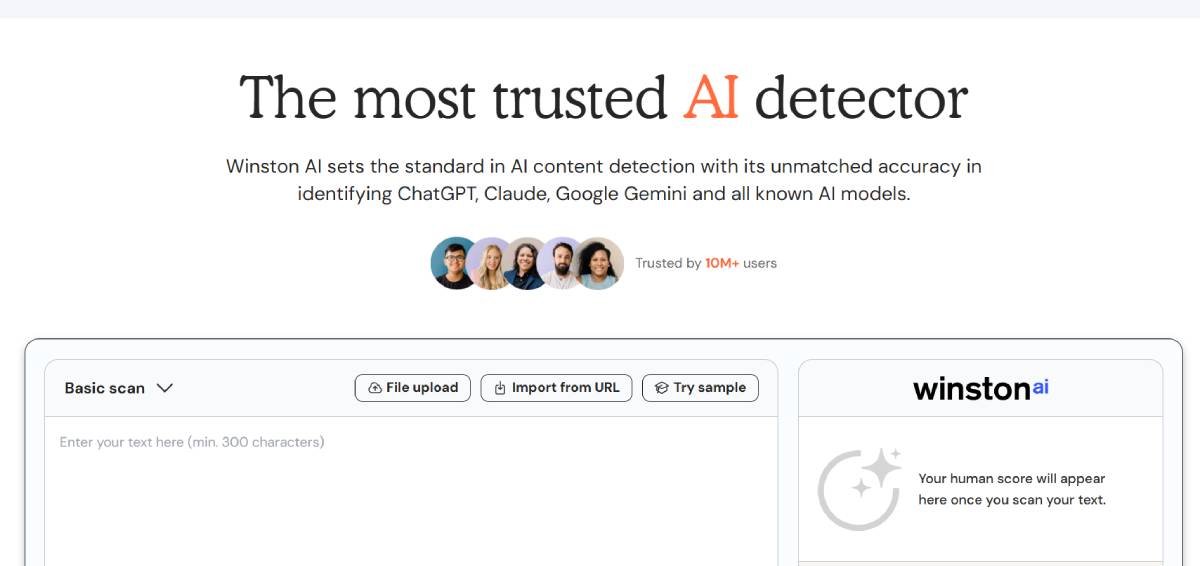

Winston AI has positioned itself as a leading AI content detection platform, offering tools to identify text generated by ChatGPT and other large language models. The platform targets sectors including education, publishing, and enterprise compliance, where verifying content originality is critical.

The tool uses machine learning algorithms to assess linguistic patterns and produce probability scores indicating how likely a text is to be AI-generated. It also provides plagiarism checks and integration capabilities for workflows requiring content validation.

As generative AI becomes mainstream, demand for such tools is rising rapidly, with organizations seeking safeguards against misinformation, academic dishonesty, and reputational risks tied to AI-generated outputs.

The development aligns with a broader trend across global markets where the proliferation of generative AI has created parallel demand for verification technologies. As AI-generated text, images, and videos become increasingly indistinguishable from human-created content, trust has emerged as a central challenge.

Historically, digital ecosystems have relied on detection tools such as spam filters and plagiarism checkers to maintain integrity. AI detection represents the next evolution of this paradigm, albeit with greater complexity due to the sophistication of modern models.

At the same time, the effectiveness of AI detectors remains debated. Advances in generative models are making detection more difficult, leading to an ongoing “arms race” between content generation and verification technologies. Governments and institutions are also exploring regulatory frameworks to address AI transparency, further driving demand for reliable detection solutions.

Industry experts suggest that AI detection tools will become essential components of enterprise risk management strategies. Organizations are increasingly concerned about the legal, ethical, and reputational implications of unverified AI-generated content.

However, analysts caution that no detection system is foolproof. False positives and negatives remain a challenge, particularly as AI models evolve rapidly. This has led to calls for multi-layered verification approaches combining detection tools with human oversight.
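One way to picture such a multi-layered approach is a triage step that treats a detector's score as a signal rather than a verdict. The sketch below is illustrative only: the `triage` function, the `Verdict` type, and the thresholds `low` and `high` are hypothetical names and values chosen for this example, not part of Winston AI or any other product. It assumes the detector returns a probability between 0 and 1.

```python
from dataclasses import dataclass


@dataclass
class Verdict:
    action: str  # "accept", "human_review", or "escalate"
    reason: str


def triage(ai_probability: float, low: float = 0.2, high: float = 0.9) -> Verdict:
    """Route content based on a detector score (hypothetical thresholds).

    No branch penalizes content automatically: because detectors produce
    false positives, even a high score only escalates to a human reviewer.
    """
    if not 0.0 <= ai_probability <= 1.0:
        raise ValueError("ai_probability must be between 0 and 1")
    if low > high:
        raise ValueError("low threshold must not exceed high threshold")

    if ai_probability < low:
        # Low scores pass through, accepting some risk of false negatives.
        return Verdict("accept", "score below low threshold")
    if ai_probability >= high:
        # High scores get priority attention from a human reviewer.
        return Verdict("escalate", "score at or above high threshold")
    # Ambiguous scores are where detectors are least reliable.
    return Verdict("human_review", "score in uncertain range")


if __name__ == "__main__":
    for score in (0.05, 0.5, 0.95):
        print(score, triage(score).action)
```

Where the thresholds sit is a policy decision: widening the middle band sends more content to reviewers but reduces the chance that a false positive or false negative goes unexamined.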

Educators and publishers have expressed both optimism and skepticism, welcoming tools that promote integrity while questioning their reliability in high-stakes scenarios.

From a corporate standpoint, companies like Winston AI emphasize continuous model updates and training to improve accuracy. Still, experts agree that detection technology must evolve in tandem with generative AI to remain effective.

For global executives, the rise of AI detection tools highlights the growing importance of trust infrastructure in digital ecosystems. Businesses may need to integrate verification systems into content workflows to ensure compliance and credibility. Investors could view this segment as an emerging market within the broader AI landscape, with potential growth driven by regulatory requirements and enterprise adoption.

From a policy perspective, governments may mandate disclosure or detection mechanisms for AI-generated content, particularly in sectors like media, education, and finance. For organizations, the challenge lies in balancing efficiency gains from AI with the need for transparency, accountability, and risk mitigation.

Looking ahead, AI detection technologies are expected to evolve alongside generative models, creating a continuous cycle of innovation and countermeasures. Decision-makers should monitor accuracy improvements, regulatory developments, and adoption trends.

Uncertainty remains around long-term effectiveness, but one trend is clear: as AI-generated content scales, the demand for tools that verify authenticity will become a defining feature of the digital economy.

Source: Winston AI

Date: April 2026