Originality.ai is advancing its AI detection tools, which are designed to identify machine-generated content. The move reflects a broader shift toward trust and verification in the digital economy and has far-reaching implications for publishers, enterprises, and regulators navigating the rapid rise of generative AI.

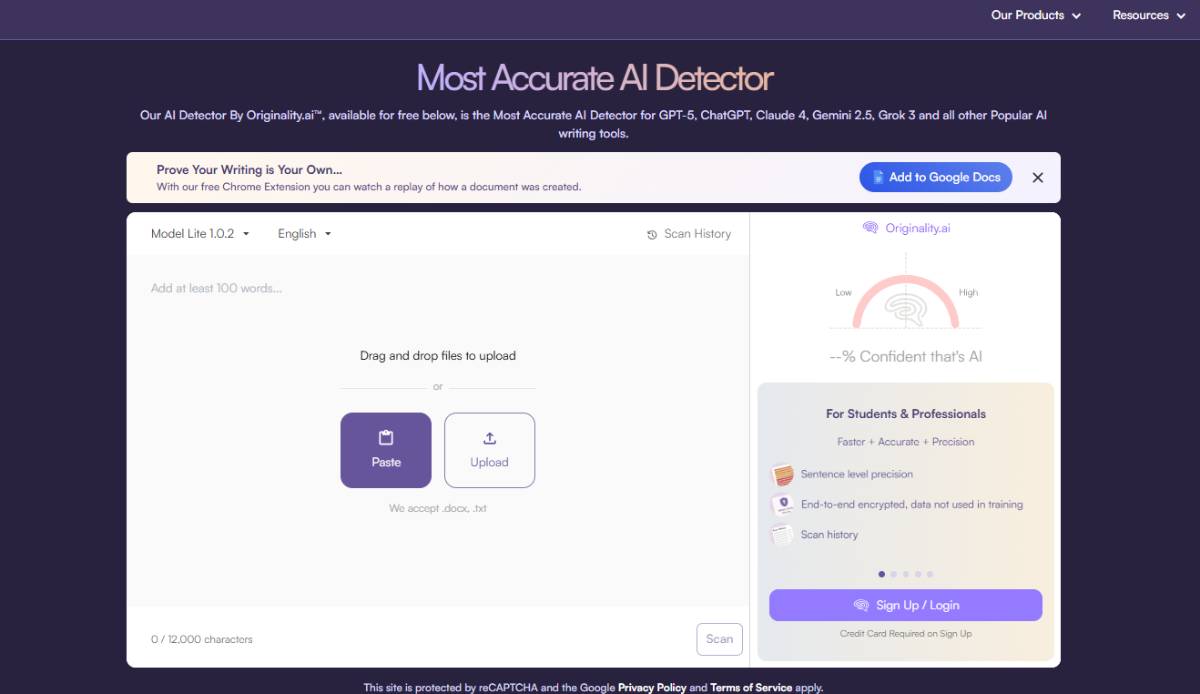

Originality.ai offers AI detection technology that analyzes text to assess whether it was generated by artificial intelligence models. The platform targets use cases such as content verification, academic integrity, and quality assurance in digital publishing.

The company provides both free and enterprise-grade tools, enabling businesses to integrate AI detection into their workflows. Its algorithms assess linguistic patterns and probabilistic signals associated with AI-generated text.
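The article does not describe how Originality.ai's models work. As a rough illustration of what a "probabilistic signal" can mean, the sketch below computes perplexity, a measure of how predictable a text is to a language model. Unusually low perplexity is one commonly cited hint of machine generation. This is a toy under stated assumptions, not Originality.ai's method: an add-one smoothed unigram model over a tiny hypothetical corpus stands in for a real language model, and the function names and the threshold of 10.0 are hypothetical.

```python
import math
from collections import Counter


def unigram_perplexity(tokens, reference_counts, vocab):
    """Perplexity of `tokens` under an add-one (Laplace) smoothed unigram model.

    `reference_counts` are token counts from a reference corpus and `vocab`
    is the set of all token types considered. Lower perplexity means the
    model finds the text more predictable.
    """
    total = sum(reference_counts.values())
    log_prob = 0.0
    for tok in tokens:
        # Laplace smoothing: every type in `vocab` gets one extra count,
        # so tokens missing from the reference corpus still have p > 0.
        p = (reference_counts[tok] + 1) / (total + len(vocab))
        log_prob += math.log(p)
    # Perplexity is exp of the average negative log-probability per token.
    return math.exp(-log_prob / len(tokens))


def looks_machine_like(tokens, reference_counts, vocab, threshold):
    """Hypothetical rule: flag text whose perplexity falls below `threshold`."""
    return unigram_perplexity(tokens, reference_counts, vocab) < threshold


# Tiny hypothetical reference corpus standing in for a real language model.
reference = "the cat sat on the mat the dog sat on the rug".split()
counts = Counter(reference)

predictable = "the cat sat on the mat".split()
unusual = "quantum zebra juggles violet algorithms".split()
vocab = set(reference) | set(predictable) | set(unusual)

for name, text in [("predictable", predictable), ("unusual", unusual)]:
    score = unigram_perplexity(text, counts, vocab)
    print(name, round(score, 2), looks_machine_like(text, counts, vocab, threshold=10.0))
```

Production detectors rely on large neural language models and typically combine several features rather than one threshold. A single cutoff like this one is brittle: formulaic human writing can also score as highly predictable, which is one reason detection results are usually paired with human review.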

As generative AI adoption accelerates, demand for detection tools is rising across sectors concerned with authenticity and compliance. The platform positions itself as a way to mitigate risks such as misinformation, plagiarism, and unreliable content.

The emergence of platforms like Originality.ai reflects a broader trend in the AI ecosystem, where the proliferation of generative models has created parallel demand for verification technologies. As AI-generated content becomes more sophisticated, distinguishing between human and machine-created material is increasingly challenging.

Industries such as media, education, and marketing are particularly affected, as they rely heavily on content authenticity and credibility. The rapid adoption of large language models has amplified concerns around misinformation, intellectual property, and ethical use of AI.

Historically, similar dynamics were observed with the rise of digital media, where verification tools evolved alongside content creation technologies. Today, AI detection is becoming a foundational component of the digital infrastructure, supporting trust and accountability in an increasingly automated content landscape.

Industry analysts view Originality.ai as part of a growing category of “AI governance tools” that aim to address the unintended consequences of generative AI. Experts note that while detection technologies are improving, they are engaged in an ongoing arms race with increasingly advanced AI models.

Technology leaders emphasize that no detection system is foolproof, highlighting the need for multi-layered approaches that combine technical tools with human oversight and policy frameworks.

Regulatory experts suggest that AI detection could play a key role in compliance, particularly as governments introduce rules around transparency and disclosure of AI-generated content. The consensus is that detection tools will become essential for maintaining trust in digital ecosystems, even as challenges around accuracy and scalability persist.

For businesses, tools like Originality.ai provide a mechanism to ensure content integrity, protect brand reputation, and comply with emerging regulations. Organizations may increasingly adopt such solutions as part of their risk management strategies.

For investors, the growth of AI detection technologies represents a complementary opportunity within the broader AI market, driven by the need for trust and verification.

From a policy perspective, the rise of AI-generated content is prompting governments to consider mandates for disclosure and accountability. Detection tools could become integral to enforcing such regulations and maintaining transparency in digital communications.

The demand for AI detection solutions is expected to grow alongside the expansion of generative AI. Originality.ai and similar platforms will likely continue to innovate in response to evolving AI capabilities. Decision-makers should monitor advancements in both generation and detection technologies, as the balance between creation and verification will shape the future of the digital content economy.

Source: Originality.ai

Date: April 10, 2026