Google has introduced its eighth-generation Tensor Processing Units (TPUs), designed to accelerate the emerging “agentic AI” era, in which autonomous systems perform complex tasks. The announcement highlights intensifying competition in AI infrastructure and carries significant implications for enterprises, developers, and global cloud markets.

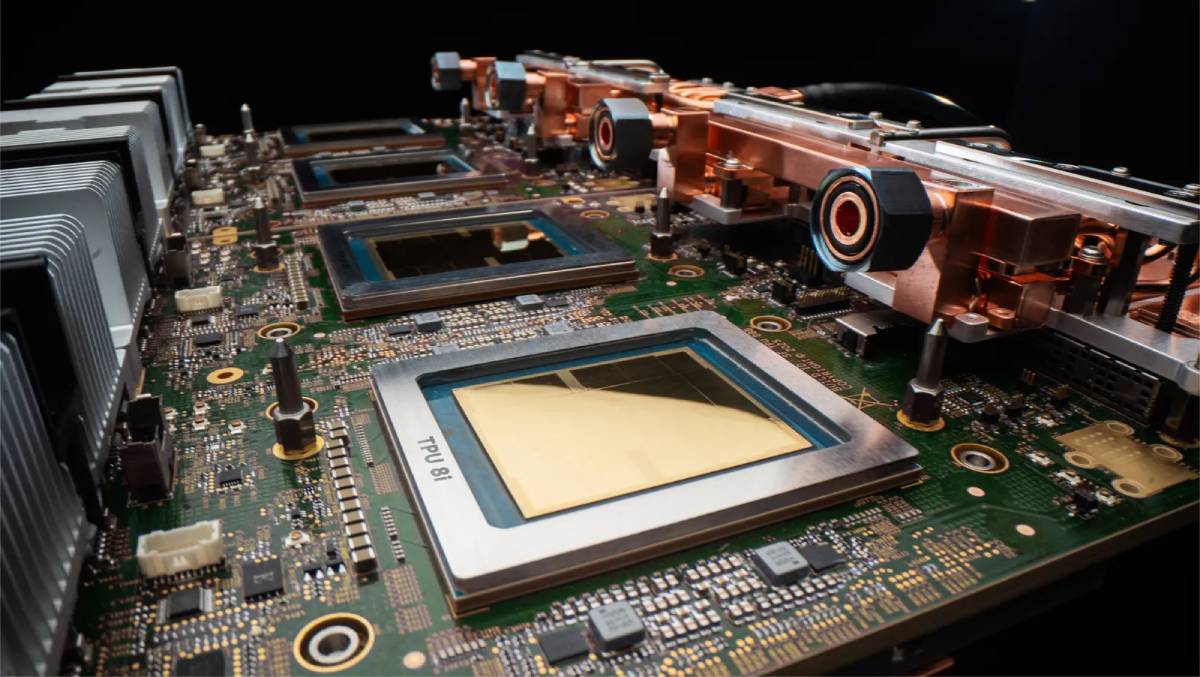

Google revealed two new TPU chips as part of its eighth-generation architecture, optimized for both AI training and inference workloads. These chips are engineered to support increasingly sophisticated AI agents capable of reasoning, planning, and executing multi-step tasks.

The rollout is closely tied to Google Cloud, reinforcing its enterprise AI offerings and infrastructure capabilities. The chips aim to deliver improved performance, efficiency, and scalability compared to previous generations.

The announcement comes amid growing demand for compute power driven by generative and agentic AI applications. It also reflects Google’s ongoing effort to compete with leading chipmakers and cloud providers in the global AI race.

The next-generation TPUs arrive as AI infrastructure has become a critical battleground among technology giants. As AI models grow in complexity, demand for specialized hardware capable of handling large-scale computation has surged.

Google has been a pioneer in custom AI chips, using TPUs internally for services such as search and for its language models, while also offering them to enterprise clients via its cloud platform. The latest iteration is designed to address the needs of “agentic AI,” a paradigm in which systems act autonomously rather than simply responding to prompts.

The development aligns with a broader trend across global markets where companies like NVIDIA and Microsoft are investing heavily in AI infrastructure. This competition is reshaping supply chains, pricing models, and innovation cycles across the semiconductor and cloud industries.

Industry analysts view Google’s latest TPU release as a strategic move to strengthen its position in the AI infrastructure ecosystem. Experts suggest that specialized chips tailored for agentic AI workloads could provide performance advantages over general-purpose GPUs in certain applications.

Technology strategists note that the shift toward autonomous AI systems requires not only advanced models but also optimized hardware capable of supporting continuous decision-making processes. Google’s investment in TPUs reflects an integrated approach combining hardware, software, and cloud services.

While official commentary emphasizes efficiency and scalability, analysts caution that competition remains intense, particularly from established players with strong developer ecosystems. The chips’ success will depend on enterprise adoption rates and on whether they deliver measurable performance gains.

For businesses, the new TPUs offer potential cost and performance benefits, enabling more advanced AI applications and faster deployment of intelligent systems. Enterprises leveraging cloud-based AI may gain access to improved capabilities without significant upfront infrastructure investments.

For investors, the announcement underscores the importance of AI hardware as a key driver of value creation in the technology sector. Companies that control both hardware and software stacks may hold a competitive edge.

From a policy perspective, the expansion of AI infrastructure raises questions around energy consumption, supply chain resilience, and technological sovereignty, prompting governments to evaluate strategies for supporting domestic capabilities.

Looking ahead, the adoption of Google’s eighth-generation TPUs will be closely monitored as enterprises scale agentic AI applications. Competitive dynamics in AI hardware are expected to intensify, with innovation cycles accelerating across the industry. For decision-makers, infrastructure choices will play a critical role in determining long-term AI competitiveness and operational efficiency.

Source: Google Blog

Date: April 23, 2026