In a growing online trend, millions of people are impersonating AI chatbots for entertainment, a sign of a cultural shift in how humans engage with artificial intelligence. The phenomenon reflects rising familiarity with AI platforms and carries implications for digital identity, user trust, and the evolving relationship between humans and machines.

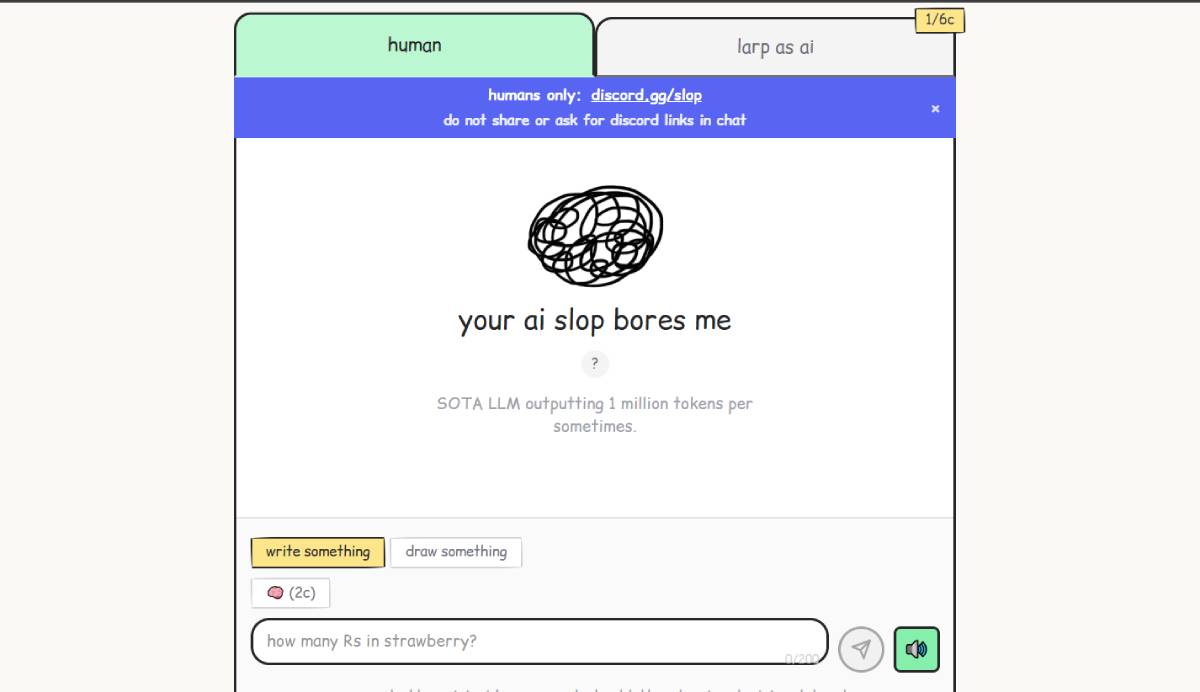

Online communities and platforms are seeing a surge in users pretending to be AI chatbots, often mimicking the tone, structure, and behavior of systems like ChatGPT. Humor, creativity, and curiosity drive the trend, which plays out in roleplay scenarios and interactive exchanges.

Those involved include social media users, content creators, and the platforms hosting these interactions. The phenomenon shows how deeply chatbot-style writing has penetrated everyday communication. It also raises questions about authenticity, as human-written and AI-generated responses become increasingly difficult to tell apart online.

The development fits a broader global pattern in which AI platforms are reshaping communication, creativity, and online interaction. Chatbots and generative AI tools have become widely accessible, influencing how people write, respond, and engage online.

Companies such as OpenAI and Google have popularized conversational AI, making chatbot-style interactions a familiar part of daily life. Historically, humans have adapted to new technologies by incorporating them into culture and humor, from early internet memes to social media trends.

This latest trend reflects a deeper integration of AI into human behavior: users not only consume AI outputs but also emulate them, blurring the boundary between human and machine communication.

Cultural analysts suggest that the trend represents a form of digital adaptation, where users internalize and replicate AI communication patterns. Experts note that this behavior reflects both fascination with and normalization of AI technologies.

Some researchers argue that mimicking AI responses can highlight the strengths and limitations of current AI systems, often exposing repetitive or overly formal patterns. At the same time, experts caution that widespread imitation could contribute to confusion in digital spaces, particularly when users cannot easily distinguish between human and AI-generated content. There is also growing discussion about the psychological and social implications of interacting in AI-like ways, including how it may influence communication norms and expectations.

For global executives, the trend highlights the cultural impact of AI adoption and the importance of maintaining trust in digital interactions. Companies may need to invest in tools that clearly differentiate between human and AI-generated content.

For platforms, a rise in humans writing like AI could complicate moderation and content verification. Policymakers may also need to consider guidelines on transparency and disclosure in AI-related interactions. More broadly, AI platforms are not only transforming industries but also reshaping human behavior and communication norms.

Looking ahead, the blending of human and AI communication styles is expected to deepen as AI tools become more advanced and widespread. Decision-makers will be watching how the trend affects trust, authenticity, and user engagement across digital platforms. The key question is how societies will adapt to a future in which the line between human and AI interaction grows increasingly indistinct.

Source: NPR

Date: April 2026